Connectivity is Key to the New Standard

As the SOSA Technical Standard matures, the extensive work of its technical working groups has advanced it even further. The additions in Snapshot 3 further define system realizations in accordance with the Modular Open Systems Approach (MOSA) directive from the DoD and other government agencies.

As the SOSA initiative nears its first release, several aspects of system design, including network speeds and power supply requirements, have been examined and defined. One of the areas most affected is connectivity. With SOSA Release 1.0 slated for mid-2021, it is worth looking at how connectivity evolved through Snapshots 1, 2 and 3. Of particular interest are the accommodations made for higher speeds and data throughput to meet the growing bandwidth needs of applications.

Network Needs in Systems Aligned to SOSA

Ethernet has evolved to allow high-speed, low-latency data transfer and can now move data quickly with prioritization. It is replacing interfaces such as PCI Express for data plane communication and has become the primary network technology specified for use in SOSA systems. It supports many of SOSA's goals, including configurability and interoperability, and it is widely used (Figure 1).

In fact, SOSA relies extensively on Ethernet networks to pass information between SOSA modules. It currently supports high-speed 1/10/40 Gb Ethernet, with 100 Gb in development, as well as low-latency, deterministic data transfer. Ethernet switching is provided by plug-in switch cards built around high-speed switch devices.
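A rough calculation helps put these line rates in perspective. The Python sketch below estimates how long a payload takes to cross a single Ethernet link at each rate named above. It rests on several assumptions:

* a standard 1500-byte MTU;
* only Layer 2 framing overhead is counted;
* the 1 GB payload is hypothetical.

It ignores IP/transport headers, switch latency and deterministic scheduling. The results are therefore idealized lower bounds, not figures specified by SOSA.

```python
# Nominal Ethernet line rates named for SOSA systems, in Gb/s
# (100 Gb was still in development at the time of writing).
LINE_RATES_GBPS = {"1 Gb": 1, "10 Gb": 10, "40 Gb": 40, "100 Gb": 100}

# Per-frame overhead on the wire for standard Ethernet, in bytes:
# preamble + start-of-frame delimiter (8), MAC header (14),
# frame check sequence (4) and minimum inter-frame gap (12).
FRAME_OVERHEAD_BYTES = 8 + 14 + 4 + 12


def transfer_time_s(payload_bytes: int, rate_gbps: float, mtu: int = 1500) -> float:
    """Idealized time to send payload_bytes over one link at full line rate.

    Assumes every frame carries up to `mtu` payload bytes and counts only
    Layer 2 framing overhead; higher-layer headers, switch latency and
    congestion are ignored.
    """
    frames = (payload_bytes + mtu - 1) // mtu  # integer ceiling division
    wire_bytes = payload_bytes + frames * FRAME_OVERHEAD_BYTES
    return wire_bytes * 8 / (rate_gbps * 1e9)


if __name__ == "__main__":
    payload = 10**9  # hypothetical 1 GB block of sensor data
    for name, rate in LINE_RATES_GBPS.items():
        print(f"{name}: {transfer_time_s(payload, rate):.3f} s")
```

Under these assumptions, the same 1 GB block takes roughly 8.2 s at 1 Gb but about 0.21 s at 40 Gb. The gap illustrates why the rising bandwidth needs of applications push toward faster links.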

SOSA systems are composed of SOSA modules that must communicate with one another. SOSA module interconnects therefore provide facilities to pass information between SOSA modules. Physical network interconnects are defined to carry that information between SOSA plug-in cards.

Because the SOSA standard defines plug-in card hardware using OpenVPX (VITA 65.0 and VITA 65.1), OpenVPX backplanes provide slots that accept plug-in cards. These backplanes interconnect SOSA hardware entities to implement the networks that SOSA systems require.

Network connections are implemented over the interconnected pipes in the backplane, and OpenVPX logically organizes these pipes into planes. The connections may be physically implemented in copper on the backplane or externally via fiber optics.

System Requirements Defined by Industry Parameters

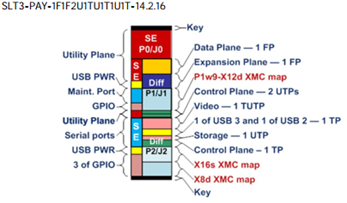

Although the snapshots targeted high network speeds from the start, the first release of SOSA now approaches. It is evident that meeting ever-increasing throughput needs has shifted the connectivity requirements in SOSA systems (Figure 2).

The need for this increased performance has influenced several connectivity design factors.

Ensuring Connectivity as the SOSA Ecosystem Builds

Because SOSA is designed to foster interoperability across manufacturers and product technologies, ensuring connectivity within a system is critical.

One notable industry event clearly demonstrated the strength of the SOSA ecosystem and helped validate the interoperability of SOSA-aligned components. This was the first Tri-Service Open Architecture Interoperability Demonstration (TSOA-ID), hosted in January 2020 by the Georgia Tech Research Institute in Atlanta, Georgia, for the media, the acquisition community and industry influencers.

Elma’s SOSA and CMOSS Aligned 12-Slot 3U Development Platform served as the heart of a joint effort by SOSA Consortium member companies to build and test a fully functioning system aligned to SOSA. The industry partners that participated in the demo using Elma’s development platforms included Behlman Power, Concurrent Technologies, Crossfield Technology, Curtiss-Wright, Interface Concept and Spectranetix (a Pacific Defense company).

Connectivity in systems aligned to SOSA has kept pace with changing market demands, even during the early stages of the standard’s development. Ensuring reliable throughput at high network speeds creates a path for SOSA to keep strengthening across all branches of the DoD.

Learn more in the webinar “How the SOSA Technical Standard Implements Highly Standardized, Configurable and Interoperable Network Communications Protocols”. It was hosted by Military & Aerospace Electronics and featured experts from Elma Electronic, Interface Concept and Pentek.